The Beginnings of Computer Technology

Computer technology has come a long way since its humble beginnings. Its conceptual roots go back to the early 19th century, when Charles Babbage designed the Difference Engine and, later, the Analytical Engine, a general-purpose mechanical computer that was never completed in his lifetime. Babbage’s ideas laid the foundation for modern computer systems.

However, it wasn’t until the mid-20th century that electronic computers started to emerge. The completion of ENIAC (Electronic Numerical Integrator and Computer) in 1945, widely regarded as the first general-purpose programmable electronic digital computer, marked a significant milestone in computer technology. This massive machine, which weighed about 30 tons and occupied an entire room, performed calculations far faster than the electromechanical machines that preceded it, revolutionizing the fields of science and engineering.

The subsequent decades witnessed rapid advancements in computer technology. Integrated circuits were invented, leading to the development of smaller and more powerful computers. This trend continued with the introduction of microprocessors, which brought computing power to individual users and laid the foundation for personal computers.

The advent of personal computers had a profound impact on society. Suddenly, individuals had access to computing power and could perform tasks that had previously required a shared institutional machine. Graphical user interfaces (GUIs), popularized by Apple’s Macintosh and Microsoft’s Windows, further democratized computer usage and made it accessible to a wide range of users.

The rise of the public internet in the 1990s transformed computer technology once again. The internet connected computers across the globe, enabling the rapid exchange of information and the birth of the digital age. The World Wide Web, introduced in the early 1990s, made it possible for people to access vast amounts of information with just a few clicks, revolutionizing communication, commerce, and entertainment.

As computer technology advanced, so did the demand for faster and more efficient machines. This led to the development of supercomputers, capable of performing complex calculations at unparalleled speeds. These systems play a crucial role in scientific research, weather forecasting, and simulating complex phenomena.

With the advent of cloud computing, computer technology took another leap forward. Cloud computing allows users to access resources and services over the internet, reducing the need for expensive on-premises hardware and software installations. This has changed how businesses operate, allowing them to scale and adapt quickly to changing market conditions.

In recent years, computer technology has expanded into new frontiers, such as artificial intelligence and machine learning. These fields involve developing computer systems that can learn, reason, and make decisions, ushering in an era of intelligent machines that can perform tasks traditionally done by humans.

Types of Computer Technology

Computer technology encompasses a wide range of devices and systems that enable us to process, store, and communicate information. This section explores the various types of computer technology that have become integral to our daily lives.

1. Personal Computers (PCs): Personal computers are among the most common types of computer technology used by individuals. These include desktops and laptops, which combine a general-purpose processor with memory and storage. PCs are versatile tools that can handle a wide range of tasks, from word processing and internet browsing to video editing and gaming.

2. Mobile Devices: With the advent of smartphones and tablets, mobile devices have become a prominent form of computer technology. These handheld devices offer high-performance computing capabilities, allowing users to access information, communicate, and perform various tasks on the go. Mobile devices have transformed the way we interact with technology, enabling us to stay connected and productive wherever we are.

3. Servers: Servers are powerful computer systems designed to provide resources and services to other computers over a network. They are used to host websites, store and manage data, and facilitate communication and collaboration. Servers play a vital role in supporting businesses and organizations by ensuring reliable and secure access to information and applications.

4. Embedded Systems: Embedded systems are computers integrated into everyday objects and devices to perform specific functions. They are often found in appliances, vehicles, industrial equipment, and medical devices. Embedded systems are designed to be reliable and efficient, and many must respond in real time, so they play a crucial role in automation and control systems.

5. Wearable Technology: Wearable technology refers to computer devices and sensors that can be worn or attached to the body. These include smartwatches, fitness trackers, and augmented reality glasses. Wearable technology enables us to monitor our health and fitness, receive notifications, and interact with digital content, blurring the lines between humans and machines.

6. Internet of Things (IoT): The Internet of Things refers to the network of interconnected devices embedded with sensors, software, and connectivity that enables them to collect and exchange data. IoT technology encompasses smart home devices, connected cars, and even smart cities. It has the potential to revolutionize industries, ranging from healthcare and transportation to agriculture and manufacturing.

7. Cloud Computing: Cloud computing technology allows users to access and utilize computing resources and services over the internet. It reduces the need for expensive on-premises hardware and software installations, offering scalability, flexibility, and cost efficiency. Cloud computing enables businesses and individuals to store and process vast amounts of data, collaborate remotely, and run complex applications without significant infrastructure investments.

The rapid evolution of computer technology has brought about immense innovation and transformative changes in various sectors. The diverse types of computer technology mentioned above continue to shape our lives, and their impact will only continue to grow as technology advances.

Hardware Components

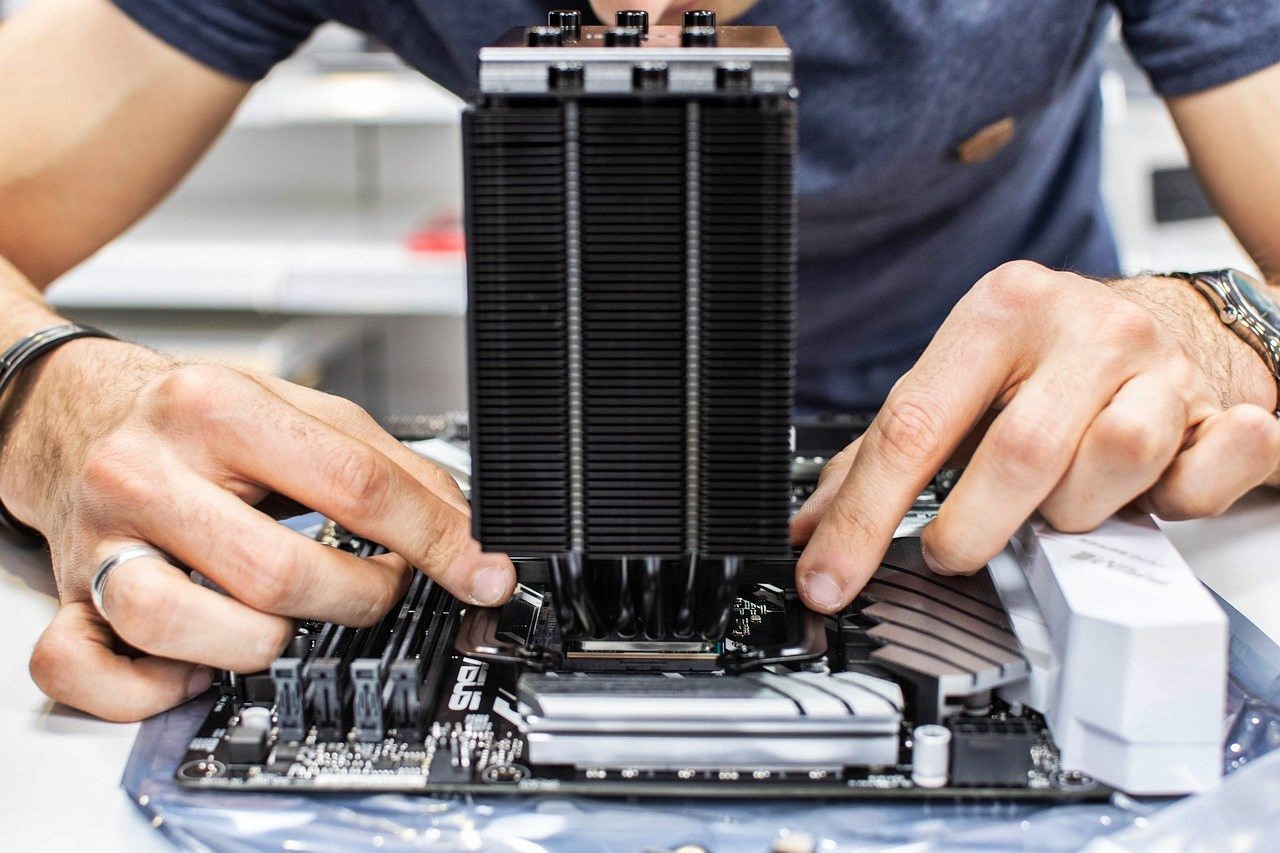

Hardware components are the physical parts that make up a computer system. They work together to enable the processing, storage, and communication of data. This section explores some essential hardware components that form the building blocks of computer technology.

1. Central Processing Unit (CPU): The CPU, also known as the processor, is the brain of the computer. It executes instructions, performs calculations, and coordinates the activities of other hardware components. The CPU’s clock speed, number of cores, and cache size strongly influence the performance of a computer.

2. Random Access Memory (RAM): RAM is fast, volatile memory that holds the data and instructions the CPU is actively using; its contents are lost when the power is turned off. It provides working storage for running applications and the operating system. The amount of RAM affects the multitasking capability and overall performance of a computer.

3. Hard Disk Drive (HDD): An HDD is a non-volatile storage device that stores data on spinning magnetic platters. It provides long-term storage for the operating system, software applications, and user files. Hard disk drives offer large storage capacities at low cost but are slower than solid-state drives (SSDs).

4. Solid-State Drive (SSD): An SSD is a faster and more shock-resistant storage device than an HDD. It stores data in non-volatile flash memory and has no moving parts. SSDs are commonly used in laptops and high-performance desktops to provide faster boot times and data access speeds.

5. Graphics Processing Unit (GPU): The GPU is the processor at the heart of a video card (graphics card), although it can also be integrated into the CPU or motherboard. It handles the rendering and display of graphics and videos, offloading graphics-related calculations from the CPU and allowing for faster and smoother visual experiences, especially in gaming and multimedia applications.

6. Motherboard: The motherboard is the main circuit board that connects and integrates all the hardware components of a computer. It houses the CPU, RAM slots, storage connectors, and expansion slots for additional components. The motherboard also provides communication between hardware devices.

7. Power Supply Unit (PSU): The PSU supplies electrical power to all the components in the computer. It converts the AC power from an electrical outlet to regulated DC power for the computer to operate. The wattage and efficiency of the PSU should be suitable for the computer’s power requirements.

8. Input and Output Devices: Input devices, such as keyboards and mice, enable users to enter commands and interact with the computer. Output devices, such as monitors and printers, display or produce information for the user to perceive. These devices facilitate communication between users and the computer.

9. Networking Components: Networking components, including network interface cards (NICs) and routers, enable computers to connect and communicate over networks. They facilitate data transfer between computers, allowing for internet access, file sharing, and networked applications.

10. Peripherals: Peripherals include devices that can be connected to the computer to enhance functionality or provide additional capabilities. Examples include external hard drives, USB flash drives, webcams, and speakers.

Understanding the various hardware components is crucial for choosing and assembling a computer system that meets specific needs. Each component plays a vital role in the overall performance and functionality of the computer.
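As a small illustration of the components listed above, a program can ask the operating system what hardware it is running on. The sketch below uses only the Python standard library; the function name `describe_hardware` and the choice of fields are assumptions made for this example, and the values it reports will differ from machine to machine.

```python
import os
import platform
import shutil


def describe_hardware(path="."):
    """Return a small summary of the machine this script runs on.

    `describe_hardware` is a hypothetical helper for illustration only.
    """
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return {
        "machine": platform.machine(),      # CPU architecture string
        "logical_cpus": os.cpu_count(),     # may be None if it cannot be determined
        "disk_total_gb": round(usage.total / 1e9, 1),
        "disk_free_gb": round(usage.free / 1e9, 1),
    }


if __name__ == "__main__":
    for name, value in describe_hardware().items():
        print(f"{name}: {value}")
```

The standard library does not expose every component (installed RAM, for instance, usually requires a platform-specific call or a third-party package), so this covers only the CPU and storage.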

Software Components

Software components are the programs and applications that enable computers to perform specific tasks. They serve as the instructions that tell the hardware components what to do. This section explores some essential software components that drive computer technology.

1. Operating System (OS): The operating system is the software that manages and controls the computer’s operations. It provides a user interface, manages system resources, and facilitates communication between software and hardware. Examples of popular operating systems include Windows, macOS, and Linux.

2. Application Software: Application software refers to programs designed to perform specific tasks or functions. Examples include word processors, spreadsheets, graphic design software, web browsers, and video editing tools. Application software enables users to complete various tasks efficiently and effectively.

3. Device Drivers: Device drivers are software components that allow computer hardware devices to communicate with the operating system. They act as intermediaries between software applications and hardware components, translating commands into instructions that the hardware can understand.

4. Utilities: Utility software provides additional tools and functionalities to enhance the computer’s performance, security, and maintenance. Examples include antivirus software, file compression tools, disk cleanup utilities, and system optimization programs.

5. Programming Languages: Programming languages are used to create software applications and programs. They provide a set of rules and syntax for writing instructions that the computer can execute. Common programming languages include Python, Java, C++, and JavaScript.

6. Compiler and Interpreter: Compilers and interpreters are software tools used in the development and execution of programs. A compiler translates the entire program into machine code (or an intermediate form such as bytecode) before execution, while an interpreter reads and executes the program statement by statement at run time.

7. Middleware: Middleware is software that acts as a bridge between different software applications or components. It facilitates communication, data exchange, and interaction between different systems and platforms. Middleware is commonly used in enterprise applications and distributed computing environments.

8. Database Management Systems (DBMS): DBMS software allows users to store, organize, and manage large amounts of data efficiently. It provides mechanisms for data retrieval, storage, and manipulation, ensuring data integrity and security. Common examples of DBMS software include MySQL, Oracle, and Microsoft SQL Server.

9. Web Development Tools: Web development tools enable the design, development, and maintenance of websites and web applications. They include code editors, frameworks, content management systems (CMS), and testing tools. Web developers rely on these software components to create engaging and functional websites.

10. Artificial Intelligence (AI) Software: AI software utilizes algorithms and machine learning techniques to simulate intelligent behavior and perform tasks that traditionally require human intelligence. Examples include voice assistants, image recognition software, and recommendation systems.

Software components are constantly evolving to meet the changing demands of technology and users. Understanding the role and functionality of these components is crucial for leveraging the full potential of computer technology.
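The distinction between translating a program up front and executing it can be sketched with Python’s own built-ins. Python is usually described as interpreted, but it first compiles source text to bytecode and then runs that bytecode; the example below separates the two steps explicitly. The variable names and the sample source string are assumptions for illustration.

```python
source = "total = sum(n * n for n in range(1, 5))"

# Step 1: translate the whole source text into a code object up front.
# Nothing has run yet; a syntax error would be reported at this point.
code_object = compile(source, "<example>", "exec")

# Step 2: execute the already-translated code in a fresh namespace.
namespace = {}
exec(code_object, namespace)
print(namespace["total"])  # 1 + 4 + 9 + 16 = 30

# A malformed program is rejected during translation, before execution starts.
try:
    compile("total = = 1", "<broken>", "exec")
    rejected = False
except SyntaxError:
    rejected = True
print("rejected before execution:", rejected)
```

A compiler for a language such as C++ does the first step once and writes the result to an executable file, whereas here the translated form lives only in memory.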

Operating Systems

An operating system (OS) is a fundamental software component that manages and controls the computer’s operations. It acts as an intermediary between users, applications, and the underlying hardware. This section delves into the importance and functionalities of operating systems in computer technology.

1. User Interface: The operating system provides a user interface that allows users to interact with the computer. This interface can be graphical, as in the Windows and macOS desktops, or command-line-based, as in a Linux shell; most modern operating systems offer both. The user interface enables users to perform tasks, access files, and launch applications in an intuitive and efficient manner.

2. Resource Management: One of the key functions of an operating system is resource management. It ensures that the computer’s hardware resources, such as CPU, memory, and storage, are efficiently allocated and utilized. The OS manages multiple processes and applications running simultaneously, allowing them to share resources without interfering with each other.

3. Memory Management: The operating system is responsible for managing the computer’s memory. It allocates memory to running processes, loads programs into memory, and releases memory when it is no longer needed. Memory management ensures that each application has sufficient memory to run smoothly and prevents memory conflicts.

4. File System Management: An operating system manages the organization and storage of files on the computer. It provides features such as file creation, deletion, and modification, as well as file access and permissions. The file system allows users to store, locate, and manage their data efficiently and securely.

5. Device Management: Operating systems handle communication with various hardware devices connected to the computer. They provide drivers and protocols that enable devices such as printers, scanners, and keyboards to function correctly. Device management ensures that hardware components can interact with software applications seamlessly.

6. Security and Protection: Security is a critical aspect of operating systems. They implement security measures to protect the computer and its data from unauthorized access, viruses, and other threats. This includes user authentication, data encryption, and firewall protection. Operating systems also enforce access control policies to ensure privacy and data integrity.

7. Networking: Many operating systems have built-in networking capabilities, enabling computers to connect to networks, such as the internet, and communicate with other devices. They provide protocols and services for network communication, facilitating activities like file sharing, remote access, and online collaboration.

8. Multi-Tasking and Multi-User Support: Operating systems support multi-tasking, allowing users to run multiple applications simultaneously. They manage the execution of processes, allocate system resources efficiently, and switch between tasks seamlessly. Operating systems also enable multi-user environments, allowing multiple users to log in and use the computer concurrently.

9. Error Handling and Recovery: Operating systems include error handling mechanisms to detect and respond to errors during program execution. They provide error messages, crash recovery features, and system logging to diagnose and resolve issues. Error handling ensures the stability and reliability of the system.

10. Software Compatibility: Operating systems play a crucial role in maintaining software compatibility. They provide APIs (Application Programming Interfaces) and libraries that allow software developers to create applications that can run on the operating system. This ensures that users can install and run a wide range of software on their computers.
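The file system functions described in item 4 (creation, modification, permissions, deletion) are reached by applications through operating system calls. A minimal sketch using Python’s standard library follows; the file name `notes.txt` is an arbitrary choice, and the exact permission string printed depends on the platform.

```python
import os
import stat
import tempfile

with tempfile.TemporaryDirectory() as workdir:
    path = os.path.join(workdir, "notes.txt")  # hypothetical file name

    # Creation and modification: the OS allocates space and records metadata.
    with open(path, "w", encoding="utf-8") as handle:
        handle.write("hello, file system")

    size_bytes = os.stat(path).st_size  # metadata kept by the file system

    # Permissions: mark the file read-only for its owner.
    os.chmod(path, stat.S_IREAD)
    mode_text = stat.filemode(os.stat(path).st_mode)
    print(size_bytes, mode_text)

    # Restore write permission so deletion also works on Windows, then delete.
    os.chmod(path, stat.S_IREAD | stat.S_IWRITE)
    os.remove(path)
    still_exists = os.path.exists(path)
```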

Operating systems are the backbone of computer technology, providing the foundation for software applications and enabling hardware components to function cohesively. They play a vital role in ensuring the smooth operation, security, and efficiency of computers, making them an indispensable part of our digital lives.
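Multi-tasking, described in item 8 above, can be observed from inside a single program by asking the operating system to run several threads at once. The sketch below is one way to do that with Python’s standard library; `simulated_task` is a hypothetical stand-in for real work such as waiting on a disk or a network.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def simulated_task(name, seconds):
    """Pretend to wait on I/O, then report which thread did the work."""
    time.sleep(seconds)
    return f"{name} finished on {threading.current_thread().name}"


start = time.perf_counter()
with ThreadPoolExecutor(max_workers=3) as pool:
    futures = [pool.submit(simulated_task, f"task-{i}", 0.2) for i in range(3)]
    results = [future.result() for future in futures]
elapsed = time.perf_counter() - start

for line in results:
    print(line)
print(f"elapsed: {elapsed:.2f}s")
```

Because the three waits overlap, the elapsed time is typically close to one task’s duration rather than the sum of all three, although the exact figure depends on how the scheduler interleaves the threads.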

Networking and Communications

Networking and communications are integral components of computer technology that enable the exchange of information and connectivity among devices. This section explores the importance and key aspects of networking and communications in the digital age.

1. Local Area Networks (LANs): LANs are computer networks that connect devices within a limited geographical area, such as an office building or a home. They facilitate the sharing of resources, such as printers and files, among connected devices. LANs utilize technologies like Ethernet and Wi-Fi to establish reliable and high-speed communication.

2. Wide Area Networks (WANs): WANs span vast geographical areas and connect multiple LANs across different locations. The internet itself is the largest example of a WAN. WANs enable communication between distant devices and facilitate the transmission of data over long distances.

3. Internet: The internet is a global network of interconnected networks, providing access to a vast amount of information and services. It enables communication and collaboration on a global scale. The internet relies on layered protocols, such as TCP/IP for transport and addressing, DNS for name resolution, and HTTP for the web, to move data between devices.

4. Networking Hardware: Networking hardware includes devices that facilitate network communication. These devices include routers, switches, modems, and network cables. Routers direct network traffic, switches connect multiple devices within a network, modems interface with the internet service provider, and network cables ensure data transmission between devices.

5. Network Protocols: Network protocols define the rules and procedures for communication within a network. TCP/IP (Transmission Control Protocol/Internet Protocol) is the most widely used protocol suite on the internet. Within it, IP handles addressing and the routing of packets between devices, while TCP adds reliable, ordered delivery on top of IP.

6. Wireless Communication: Wireless communication technologies, such as Wi-Fi and Bluetooth, have revolutionized how devices connect and interact. Wi-Fi allows devices to connect to a network without the need for physical cables, providing flexible and convenient connectivity. Bluetooth enables wireless communication between devices at short distances.

7. Voice and Video Communication: Networking technology has facilitated real-time voice and video communication over long distances. Voice-over-IP (VoIP) allows making phone calls over the internet, while video conferencing enables face-to-face communication regardless of physical location. These technologies have transformed how businesses and individuals communicate and collaborate.

8. Unified Communications: Unified communications integrate various communication tools and platforms into a single, cohesive system. It combines real-time communication, such as voice and video, with messaging, email, and collaboration tools. Unified communications simplify and streamline communication, enabling efficient and effective collaboration.

9. Mobile and Wireless Networks: Mobile networks, such as 3G, 4G, and 5G, enable wireless communication on mobile devices like smartphones and tablets. These networks provide high-speed internet access, allowing users to stay connected while on the move. Mobile networks have revolutionized how people access information and interact with technology.

10. Internet of Things (IoT): From a networking perspective, the Internet of Things is a network of devices embedded with sensors, software, and connectivity that collect and exchange data. IoT technology allows for smart home automation, industrial monitoring, environmental sensing, and much more. IoT networks and communication protocols enable integration and communication between devices and systems.

Networking and communications are the backbone of modern technology, enabling devices to connect, communicate, and share information. From local area networks to the global reach of the internet, these technologies have transformed how we communicate, collaborate, and access information in today’s digital world.
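To make the role of TCP/IP concrete, the following sketch opens a TCP connection between a tiny server and a client on the same machine and echoes one message back. It assumes the loopback address 127.0.0.1 is available; the helper name `echo_once` and the message are illustrative choices, and a real server would handle many connections and partial reads.

```python
import socket
import threading


def echo_once(server_sock):
    """Accept a single connection and send back whatever arrives."""
    conn, _addr = server_sock.accept()
    with conn:
        data = conn.recv(1024)
        conn.sendall(data)


# Bind to the loopback address; port 0 asks the OS to pick any free port.
server = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
server.bind(("127.0.0.1", 0))
server.listen(1)
host, port = server.getsockname()

worker = threading.Thread(target=echo_once, args=(server,))
worker.start()

# Client side: connect to the IP address and port, send bytes, read the echo.
with socket.create_connection((host, port), timeout=5) as client:
    client.sendall(b"ping")
    reply = client.recv(1024)

worker.join()
server.close()
print(reply)
```

The IP address and port identify where the data should go, while TCP takes care of delivering the bytes reliably and in order.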

Computer Programming

Computer programming is the process of creating sets of instructions that tell a computer how to perform specific tasks. It is a vital aspect of computer technology that enables the development of software applications and the automation of various processes. This section explores the importance and key aspects of computer programming.

1. Instruction Writing: Computer programming involves writing instructions, also known as code, in a programming language. These instructions inform the computer about the specific steps and logic required to perform a task. Programming languages, such as Python, Java, C++, and JavaScript, provide a set of rules and syntax for writing code.

2. Algorithm Design: The foundation of computer programming lies in algorithm design. An algorithm is a step-by-step procedure for solving a specific problem or achieving a specific goal. Programmers analyze the problem, design algorithms, and implement them in code to provide a solution.

3. Problem Solving: Computer programming enables problem-solving by breaking complex problems into smaller, more manageable components. Programmers identify the core objectives, analyze the requirements, and design algorithms to address the challenges. They use logical and analytical thinking to develop efficient and effective solutions.

4. Software Development: Programming is an essential part of software development. Programmers work on creating software applications that solve specific problems or fulfill certain needs. They write code to implement functionalities, ensure proper data management, and create user-friendly interfaces. Software development involves various stages, including design, coding, testing, and maintenance.

5. Application Customization: Programming allows for the customization and tailoring of software applications to meet specific requirements. Programmers can modify existing applications or develop new ones to fulfill unique needs. Customization may involve adding new features, integrating with other systems, or optimizing performance.

6. Debugging and Troubleshooting: Debugging is an essential part of programming. Programmers identify and fix errors, commonly known as bugs, in the code to ensure that the program functions as intended. Troubleshooting involves identifying and resolving issues that affect the performance or functionality of the software application.

7. Collaboration and Version Control: Programming often involves collaboration, as multiple programmers may work together on a project. Version control systems, such as Git, enable programmers to manage and track changes made to the codebase. Collaboration and version control ensure efficient teamwork, seamless integration of code, and effective project management.

8. Automation and Efficiency: Programming allows for the automation of repetitive tasks. By writing code, programmers can eliminate manual efforts, increase efficiency, and save time. Automation simplifies complex processes, improves productivity, and reduces the chances of human error.

9. Software Integration: Programming enables the integration of various software systems and components. Application programming interfaces (APIs) allow different software applications to communicate and share data. Integration enhances the functionality and interoperability of software systems, enabling seamless information flow.

10. Continuous Learning and Adaptation: Programming is a dynamic field that requires continuous learning and adaptation. Programmers need to stay updated with the latest programming languages, tools, and techniques. They must be open to new concepts, practices, and paradigms to keep up with the evolving demands of technology.

Computer programming plays a pivotal role in the development of software applications and the automation of tasks. It requires logical thinking, problem-solving skills, and creativity. With programming, we can harness the power of computers to solve problems, create innovative solutions, and shape the future of technology.
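As a concrete instance of the algorithm design described in item 2, binary search finds a value in a sorted list by repeatedly halving the range that could contain it. The version below is a minimal sketch; the function name and the convention of returning -1 for a missing value are choices made for this example.

```python
def binary_search(sorted_items, target):
    """Return the index of target in sorted_items, or -1 if it is absent."""
    low, high = 0, len(sorted_items) - 1
    while low <= high:
        mid = (low + high) // 2
        if sorted_items[mid] == target:
            return mid
        if sorted_items[mid] < target:
            low = mid + 1   # target can only be in the upper half
        else:
            high = mid - 1  # target can only be in the lower half
    return -1


primes = [2, 3, 5, 7, 11, 13]
print(binary_search(primes, 7))  # 3
print(binary_search(primes, 4))  # -1
```

Each comparison discards half of the remaining candidates, so a list of a million items needs at most about twenty comparisons, which illustrates why the choice of algorithm matters as much as the code that implements it.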

Data Storage

Data storage is a fundamental aspect of computer technology that involves the retention of digital information for later use. It encompasses various methods and technologies to store and organize data effectively. This section explores the importance and different aspects of data storage.

1. Data Organization: Data storage involves organizing and structuring data in a way that facilitates efficient retrieval and management. Hierarchical systems, such as file systems, use directories and folders to store files in a structured manner. Databases employ tables, rows, and columns to organize and relate data based on specific criteria.

2. Storage Media: Storage media, both physical and virtual, are used to store data. Physical storage media include hard disk drives (HDDs), solid-state drives (SSDs), magnetic tapes, and optical discs. Virtual storage media, such as cloud storage, provide remote and scalable data storage over the internet.

3. Capacity and Scalability: Data storage systems vary in their capacity to hold data. Storage devices can range from gigabytes (GBs) to terabytes (TBs) and even petabytes (PBs) in size. Scalability is essential to accommodate growing data needs, allowing storage capacities to be increased as required.

4. Data Access and Retrieval: Quick and reliable data access is crucial for efficient data storage. Random access memory (RAM) provides fast, temporary storage that enables the computer to access frequently used data quickly. Hard disk drives (HDDs) and solid-state drives (SSDs) allow for the long-term storage of data that can be accessed when needed.

5. Backup and Recovery: Data storage solutions often include mechanisms for backup and recovery. Regular backups ensure that data is duplicated and preserved in case of accidental deletion, hardware failure, or other disruptions. Recovery procedures allow for the restoration of data from backups to minimize data loss.

6. Data Compression: Data compression techniques are used to reduce storage requirements. Compression algorithms reduce the size of data files, allowing more efficient utilization of storage space. Compressed data can be decompressed to its original form when needed for processing or analysis.

7. Data Security: Data storage includes measures to ensure data security and integrity. Encryption techniques protect sensitive data from unauthorized access, providing an additional layer of security. Access control mechanisms, such as user authentication and permissions, safeguard data by restricting access to authorized individuals.

8. Redundancy and Fault Tolerance: Redundancy is used in storage systems to minimize the risk of data loss. Data redundancy involves creating multiple copies of data, stored on separate storage devices or locations. This redundancy provides fault tolerance, ensuring that data remains accessible even in cases of hardware failure or data corruption.

9. Database Management Systems (DBMS): DBMS software is used to manage large volumes of structured data. A relational DBMS organizes data into tables with defined relationships and provides mechanisms for efficient querying and data retrieval. It ensures data consistency, integrity, and security in multi-user environments.

10. Cloud Storage: Cloud storage has become increasingly popular due to its scalability, accessibility, and cost-effectiveness. Data is stored on remote servers maintained by cloud service providers. Cloud storage allows for easy access to data from multiple devices and provides the flexibility to scale storage capacities as needs change.
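The compression and integrity ideas in items 6 and 7 can be sketched in a few lines. The example below assumes lossless compression with `zlib` and a SHA-256 checksum from `hashlib`, both in Python’s standard library; the sample data is invented and deliberately repetitive, so the ratio it prints is not representative of real files.

```python
import hashlib
import zlib

# Invented, highly repetitive sample data (compresses unusually well).
original = b"sensor reading: 21.5 C\n" * 200

compressed = zlib.compress(original, 9)  # 9 = strongest compression level
restored = zlib.decompress(compressed)

ratio = len(compressed) / len(original)
print(f"{len(original)} bytes -> {len(compressed)} bytes ({ratio:.1%})")

# A checksum recorded before storage lets us verify the data afterwards.
checksum_before = hashlib.sha256(original).hexdigest()
checksum_after = hashlib.sha256(restored).hexdigest()
print("intact:", checksum_before == checksum_after)
```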

Data storage is a critical component of computer technology, ensuring that information is securely stored and easily accessible. With the advent of advanced storage technologies and increasing data volumes, effective data storage solutions are crucial for businesses and individuals alike.
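To show how a DBMS organizes data into tables and answers queries, here is a minimal sketch using SQLite through Python’s built-in `sqlite3` module. SQLite is swapped in only because it needs no server; it is not one of the systems named above, and the `devices` table and its rows are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # throwaway in-memory database

# Hypothetical schema: one table with typed columns.
conn.execute(
    "CREATE TABLE devices ("
    "id INTEGER PRIMARY KEY, name TEXT NOT NULL, capacity_gb INTEGER)"
)
conn.executemany(
    "INSERT INTO devices (name, capacity_gb) VALUES (?, ?)",
    [("hdd", 2000), ("ssd", 512), ("usb", 64)],
)
conn.commit()

# Declarative query: say what is wanted, and the DBMS decides how to find it.
rows = conn.execute(
    "SELECT name, capacity_gb FROM devices "
    "WHERE capacity_gb >= ? ORDER BY capacity_gb DESC",
    (500,),
).fetchall()
conn.close()

print(rows)  # [('hdd', 2000), ('ssd', 512)]
```

The `?` placeholders pass values separately from the SQL text, which is the usual way to avoid SQL injection when values come from users.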

Artificial Intelligence and Machine Learning

Artificial Intelligence (AI) and Machine Learning (ML) have revolutionized computer technology by enabling machines to simulate intelligent behavior and learn from data. AI and ML are interdisciplinary fields that focus on developing algorithms and systems that can perform tasks traditionally done by humans. This section explores the significance and key aspects of AI and ML in computer technology.

1. Understanding AI and ML: AI refers to the development of computer systems that can perform tasks requiring human intelligence, such as speech recognition, decision-making, and problem-solving. ML is a subset of AI that focuses on creating algorithms that enable machines to learn and improve from experience. ML algorithms learn patterns and make predictions by analyzing large datasets.

2. Neural Networks: Neural networks are a key component of AI and ML. They are computational models loosely inspired by the structure and function of the human brain. Neural networks process data through interconnected nodes, or artificial neurons, to recognize patterns and make decisions. Deep neural networks, the basis of deep learning, stack many layers of interconnected neurons and are used for complex tasks like image and speech recognition.

3. Natural Language Processing (NLP): NLP is a branch of AI that focuses on enabling computers to understand, interpret, and generate human language. NLP algorithms analyze and process text and speech data, enabling tasks such as language translation, sentiment analysis, and chatbots.

4. Computer Vision: Computer vision is a field of AI that enables machines to analyze and interpret visual information from images or videos. Computer vision algorithms extract and understand meaning from visual data, enabling applications such as object recognition, image classification, and video tracking.

5. Recommendation Systems: AI and ML play a significant role in recommendation systems, which provide personalized suggestions based on user preferences and past behavior. Recommendation systems are used in e-commerce platforms, streaming services, and social media networks to enhance user experiences and improve customer satisfaction.

6. Autonomous Systems: AI and ML power the development of autonomous systems, which can perform tasks without human intervention. Self-driving cars, drones, and robots are examples of autonomous systems that rely on AI and ML algorithms to interpret their surroundings, make decisions, and navigate their environments safely.

7. Data Analysis and Insights: ML algorithms analyze large datasets to discover patterns, trends, and insights that might not be readily apparent to humans. This allows businesses to make data-driven decisions, optimize processes, and improve operations. ML is used in finance, healthcare, marketing, and various other industries to leverage the power of data.

8. Natural Language Generation (NLG): NLG is an AI technology that converts structured data into human-readable text. NLG systems generate reports, summaries, and narratives, enabling automated generation of content that is coherent and contextually accurate. NLG has applications in data analysis, business intelligence, and content creation.

9. Reinforcement Learning: Reinforcement learning is a branch of ML in which agents learn to make decisions by interacting with an environment. Agents receive feedback in the form of rewards or penalties for their actions, allowing them to learn effective strategies through trial and error. Reinforcement learning has been used successfully in areas such as game playing, robotics, and resource optimization.

10. Ethical Considerations: The development and deployment of AI and ML systems raise ethical considerations. As these technologies become more powerful, there is a need to address issues such as data privacy, algorithmic bias, and transparency. Addressing these issues is crucial to mitigating potential risks and ensuring the responsible use of these technologies.
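
To make the description of neural networks in item 2 more concrete, here is a minimal sketch of how data flows through layers of artificial neurons. It assumes a sigmoid activation and hand-picked weights; the names (neuron, layer, forward, NETWORK) are illustrative only, and a real network would learn its weights from data rather than have them written by hand.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of the inputs plus a bias, then an activation."""
    total = sum(i * w for i, w in zip(inputs, weights)) + bias
    return sigmoid(total)


def layer(inputs, weight_rows, biases):
    """A layer is a list of neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


def forward(inputs, layers):
    """Feed the inputs through each layer in turn; stacking layers is what makes a network deep."""
    activations = inputs
    for weight_rows, biases in layers:
        activations = layer(activations, weight_rows, biases)
    return activations


# Hypothetical, hand-picked weights (assumption: a trained network would learn these).
NETWORK = [
    ([[0.5, -0.6], [0.1, 0.8]], [0.0, -0.1]),  # hidden layer: 2 neurons, 2 inputs each
    ([[1.2, -0.7]], [0.05]),                   # output layer: 1 neuron
]

output = forward([1.0, 0.0], NETWORK)
print(output)  # a single value between 0 and 1
```

The output of each layer becomes the input of the next, which is the sense in which the nodes are interconnected.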

AI and ML have the potential to revolutionize industries, improve decision-making processes, and enhance user experiences. As technology advances, AI and ML will continue to play a pivotal role in shaping the future of computer technology.
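
The trial-and-error learning described under Reinforcement Learning above can be sketched with a very small example. This is one simple strategy (often called epsilon-greedy), not the only one, and it assumes a toy environment with three actions whose fixed rewards are unknown to the agent. The function name run_bandit and all parameter values are hypothetical.

```python
import random


def run_bandit(rewards, steps=200, epsilon=0.1, seed=0):
    """Learn which action pays best by trial and error (epsilon-greedy)."""
    rng = random.Random(seed)
    estimates = [0.0] * len(rewards)  # the agent's current guess of each action's value
    counts = [0] * len(rewards)       # how often each action has been tried

    for step in range(steps):
        if step < len(rewards):
            action = step                                # try every action once first
        elif rng.random() < epsilon:
            action = rng.randrange(len(rewards))         # explore: pick a random action
        else:
            action = estimates.index(max(estimates))     # exploit: best action known so far

        reward = rewards[action]                         # feedback from the environment
        counts[action] += 1
        # Incremental average: move the estimate toward the observed reward.
        estimates[action] += (reward - estimates[action]) / counts[action]

    return estimates, counts


# Hypothetical environment: fixed rewards per action, hidden from the agent.
estimates, counts = run_bandit([0.2, 0.8, 0.5])
best_action = estimates.index(max(estimates))
print(best_action)  # 1, the action with the highest reward
```

Real reinforcement learning problems add states, delayed rewards, and noisy feedback, but the loop of acting, observing a reward, and updating an estimate stays the same.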

Cybersecurity and Privacy

In today’s interconnected digital world, cybersecurity and privacy are critical concerns in computer technology. The increasing reliance on technology and the vast amounts of data being generated and shared have created new vulnerabilities and risks. This section explores the significance and key aspects of cybersecurity and privacy.

1. Threat Landscape: The threat landscape in cybersecurity is constantly evolving, with new cyber threats and attacks emerging regularly. Cybercriminals employ a variety of techniques, such as malware (including ransomware) and social engineering (including phishing), to compromise systems and steal sensitive information. It is crucial to stay updated with the latest threats and employ robust security measures to protect against them.

2. Risk Management: Cybersecurity involves identifying, assessing, and managing risks to information and computer systems. Risk management strategies include implementing security controls, conducting regular risk assessments, and developing incident response plans. By understanding potential vulnerabilities and taking proactive measures, organizations can minimize the impact of cybersecurity incidents.

3. Encryption: Encryption is a fundamental technique used to safeguard data from unauthorized access. It involves transforming data into an unreadable form using cryptographic algorithms and a key. Encryption protects the confidentiality of data, making it impractical for attackers to read even if they intercept it. Protecting integrity, that is, detecting tampering, additionally requires authenticated encryption or a message authentication code.

4. Authentication and Access Control: Authentication mechanisms, such as passwords and biometrics, verify the identity of users before granting them access to systems and data; two-factor authentication strengthens this by requiring two independent factors. Access control then restricts each authenticated user to the resources they are authorized to use, reducing the risk of data breaches.

5. Security Software: Security software, such as antivirus and antimalware programs, firewalls, and intrusion detection systems, plays a crucial role in defending against cyber threats. These tools detect and block malicious activity, including malware infections and unauthorized network access, safeguarding computers and networks.

6. Privacy Protection: Privacy is a key concern in computer technology. Data privacy involves collecting, using, and storing personal information in compliance with applicable regulations and industry standards. Privacy protection mechanisms, such as data anonymization and consent management, help ensure that personal data is not exposed unnecessarily and that individuals know how their data is used and retain control over it.

7. Secure Coding Practices: Secure coding practices are critical in software development to prevent vulnerabilities that can be exploited by cyber attackers. Following secure coding guidelines, such as validating all input, reviewing and testing code to catch bugs early, and encrypting sensitive data, can significantly reduce the risk of security breaches.

8. Security Education and Awareness: Educating users and raising awareness about cybersecurity threats is essential. Regular security training can help individuals choose strong passwords, recognize phishing attempts, and practice safe online behavior. Increased awareness empowers users to take active roles in protecting their data and systems.

9. Incident Response and Recovery: Despite preventive measures, cybersecurity incidents can still occur. An incident response plan ensures an organized and effective response when they do. Incident response includes identifying and containing the incident, investigating the root cause, and initiating recovery measures to restore systems and data.

10. Compliance and Regulations: Compliance with cybersecurity regulations and industry standards is crucial for protecting sensitive data and meeting legal requirements. Regulations such as the General Data Protection Regulation (GDPR) and the Health Insurance Portability and Accountability Act (HIPAA) require organizations to implement appropriate security measures and safeguard personal and health information.
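
As an illustration of the password-based authentication in item 4 and the secure coding practices in item 7, the sketch below stores a salted, deliberately slow hash of a password instead of the password itself. It uses PBKDF2 from Python's standard library as one possible choice; the iteration count and the function names hash_password and verify_password are assumptions made for this example, not recommendations from any particular standard.

```python
import hashlib
import hmac
import os

ITERATIONS = 200_000  # illustrative work factor (assumption); choose per current guidance


def hash_password(password, salt=None):
    """Return (salt, derived_key) to store in place of the plain-text password."""
    if salt is None:
        salt = os.urandom(16)  # a fresh random salt for each user
    key = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, key


def verify_password(password, salt, expected_key):
    """Re-derive the key from the login attempt and compare in constant time."""
    _, key = hash_password(password, salt)
    return hmac.compare_digest(key, expected_key)


salt, stored_key = hash_password("correct horse battery staple")
print(verify_password("correct horse battery staple", salt, stored_key))  # True
print(verify_password("wrong guess", salt, stored_key))                   # False
```

Because only the salt and derived key are stored, an attacker who steals the database still has to guess each password, and the slow key derivation makes every guess expensive.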

Ensuring robust cybersecurity measures and protecting privacy are essential in today’s digital landscape. By implementing comprehensive security practices, organizations and individuals can mitigate risks, safeguard information, and maintain trust in the digital ecosystem.
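
Detecting tampering, the integrity goal mentioned under Encryption above, is commonly handled with a message authentication code. The sketch below uses HMAC-SHA256 from Python's standard library. The key, the message, and the helper names sign and is_untampered are hypothetical, and a real system would generate and store its key securely rather than hard-coding it.

```python
import hashlib
import hmac


def sign(message, key):
    """Compute an authentication tag that depends on both the message and the secret key."""
    return hmac.new(key, message, hashlib.sha256).digest()


def is_untampered(message, tag, key):
    """Recompute the tag and compare in constant time; any change to the message breaks the match."""
    return hmac.compare_digest(sign(message, key), tag)


KEY = b"demo-key-not-for-production"  # hypothetical key, for illustration only
message = b"transfer 100 to account 42"
tag = sign(message, KEY)

print(is_untampered(message, tag, KEY))                        # True
print(is_untampered(b"transfer 900 to account 42", tag, KEY))  # False
```

The tag does not hide the message; it only lets the holder of the key detect changes, which is why it is typically combined with encryption.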

Future Trends and Developments

The field of computer technology is continually evolving, driven by advances in hardware and software and by changing user needs. This section explores some of the trends and developments that are shaping its future.

1. Artificial Intelligence Advancements: Artificial Intelligence (AI) is expected to continue advancing, enabling machines to perform even more complex tasks. AI techniques, such as deep learning, will continue to improve, allowing for more accurate speech recognition, image processing, and natural language understanding. AI will find applications in various industries, including healthcare, finance, and autonomous systems.

2. Internet of Things (IoT) Expansion: The Internet of Things is set to expand further, with more devices and objects becoming connected. IoT technology will penetrate various industries, ranging from smart homes and wearable devices to industrial automation and smart cities. The increased connectivity will lead to improved efficiency, enhanced monitoring capabilities, and new opportunities for data-driven insights.

3. Edge Computing: Edge computing involves moving data processing and storage closer to the source, reducing the need for centralized computing infrastructure. This trend aims to address latency, bandwidth limitations, and privacy concerns. Edge computing will facilitate real-time data analysis and decision-making, benefiting applications that require low latency, such as autonomous vehicles and industrial automation.

4. Quantum Computing: Quantum computing is an emerging field that leverages principles of quantum physics, such as superposition and entanglement, to perform certain computations in ways classical computers cannot. Quantum computers have the potential to solve some complex problems, such as factoring the large numbers that underpin widely used cryptography and certain optimization tasks, much faster than classical computers. While still in its early stages, quantum computing holds promise for revolutionizing various fields, including drug discovery, weather modeling, and artificial intelligence.

5. Virtual and Augmented Reality: Virtual reality (VR) and augmented reality (AR) technologies are expected to become more immersive and seamlessly integrated into various applications. VR is revolutionizing gaming, education, and training by providing immersive virtual environments. AR overlays digital information onto the real world, enhancing experiences in fields such as healthcare, retail, and industrial maintenance.

6. 5G and Next-Generation Networks: The rollout of 5G networks will enable higher data rates and lower-latency communication. This will open up new opportunities for real-time applications, such as autonomous vehicles, remote surgery, and augmented reality experiences. Next-generation networks will support the growing demand for bandwidth-intensive applications and IoT connectivity as connected devices proliferate.

7. Data Analytics and Machine Learning: With the increase in data generation, the importance of data analytics and machine learning will only grow. Organizations will harness the power of big data to derive actionable insights, predict trends, and optimize decision-making processes. Machine learning algorithms will continue to evolve, improving accuracy and enabling automated data analysis and decision-making at scale.

8. Cybersecurity Advancements: As cyber threats continue to evolve, advancements in cybersecurity will be crucial. Stronger encryption, advanced threat detection techniques, and proactive defense mechanisms will be developed to safeguard against sophisticated attacks. Artificial intelligence and machine learning will play a significant role in detecting and responding to threats in real time.

9. Green Computing: The focus on sustainability and energy efficiency will drive developments in green computing. Energy-efficient hardware, power management systems, and renewable energy sources will be utilized to minimize the environmental impact of computing operations. Cloud computing and virtualization can contribute to reducing energy consumption and carbon emissions by consolidating workloads onto fewer, better-utilized servers.

10. Human-Computer Interaction: Future developments will seek to enhance human-computer interaction, making technology more intuitive and seamlessly integrated into our daily lives. Natural user interfaces, such as voice and gesture recognition, will enable more natural and effortless interactions with computers. Brain-computer interfaces and haptic feedback technologies aim to bridge the gap between humans and machines even further.

The future of computer technology holds exciting possibilities. Advancements in AI, IoT, quantum computing, and other emerging technologies will continue to shape the way we work, communicate, and live. Embracing these trends and developments will unlock new opportunities and drive innovation in the digital age.